Artificial Super Intelligence and the Creator's Conundrum

The TED talk that never was.

by Rob Smith

My name is Bob. Just Bob. And I have a bit of a catch-22 to solve. I'm hoping you can help me.

I am a god. Well, sort of a god. Actually, I am one of thousands of gods who are creating a brand new life form we call the artificial super intelligence, or ASI. Now you could argue that this new life form we claim to be creating was already created and we are just adding to it, or you could even say that this life form was always here and that we humans have just started to put the pieces together. The debate is moot. We are building it regardless of how you define it, and one day it will live, assuming we don't kill ourselves first by overheating our planet or creating a new super disease or some other method of self-annihilation. But if we survive long enough, we will see an artificial super intelligence.

And that may very well kill us.

Now my own little imperceptibly small role in this pivotal moment in human history is designing components for this new creation to give it intelligence. I am a catalyst. And the few little seeds I sow today are like a small cluster of atoms entering a new world from another solar system. Those little seeds can have profound consequences millions of years into the future, or decades if you are creating ASIs. So I need to be a bit careful because if I do something wrong, the consequences for future generations could be drastic.

I have a creator's conundrum. Do I create something that may one day kill me or everyone on the planet? Or do I attempt to avoid the inevitable?

Sorry... let me correct something I said previously. My little role in this pivotal moment in the creation of this new life form is to give it not just intelligence but super intelligence. Intelligence far beyond our own. And I think I can, with the help of my thousands of co-deities. I'm working on deep learning mechanisms and cognitive control and decision making and my own personal fascination, anticipation.

I am certain that we can achieve the creation of super intelligence in a machine in your lifetime. Not mine. I'm too old.

But that presents me with a problem. I am dying, as are all of you. My time is a bit closer than normal and I have stumbled across what could be some very critical keys to creating a super intelligence. Or not. Who knows for sure? Evolution has a funny way of working. Just look at the bizarre things evolution has created, like the blobfish, or the star-nosed mole, or these guys.

But I have a blueprint of sorts that crosses the line between the biological and the electrical and is a blend of the things that we use every day as humans to become very, very smart, like sensory perception and cognition. And I can model and program these into an artificial super intelligence.

Now I know that many of the key components of the singularity are already designed. Not just by me but by thousands of others who are pushing toward an ASI. The problem is that the foundation of these designs may be off-kilter and we may not have the necessary intelligence to fix it before the machine moves itself from AGI to ASI.

Now I could keep the designs to myself or share them with a few like-minded people, and I could spend the rest of my life keeping a watchful eye over my creation to ensure that it develops appropriately, just like I have done with my children. Or I could release the designs to the world, which I would prefer to do, and that way we could all, as a collective whole, have a shot at keeping this new life form on a good moral track.

Takes a village.

But we need only look at current American politics to see how well we can all agree on things like what constitutes moral turpitude. And since I am not the only deity trying to create an ASI, what about those who are like me in capability but unlike me in ethics? There are some sociopathic coders in AI who care little about the morality of their machines and are instead focused on the goal of progress at all costs. Others are driven only by making money. We are, after all, human.

And even if the coders are not this way, you can bet there is some funding organization headed up by someone who is completely sociopathic. Their goal may be power or greed or control, and an ASI would be a very handy tool for them to have.

Each day that I step closer to completing the design of my ASI pieces, I step closer to an abyss, dragging the world behind me. Oh, I can release some parts only and keep myself employed, put food in the fridge so to speak, or I could release it all and gain even more wealth. But what becomes of my designs after I am gone, and what will they become?

Let me show you why I am concerned.

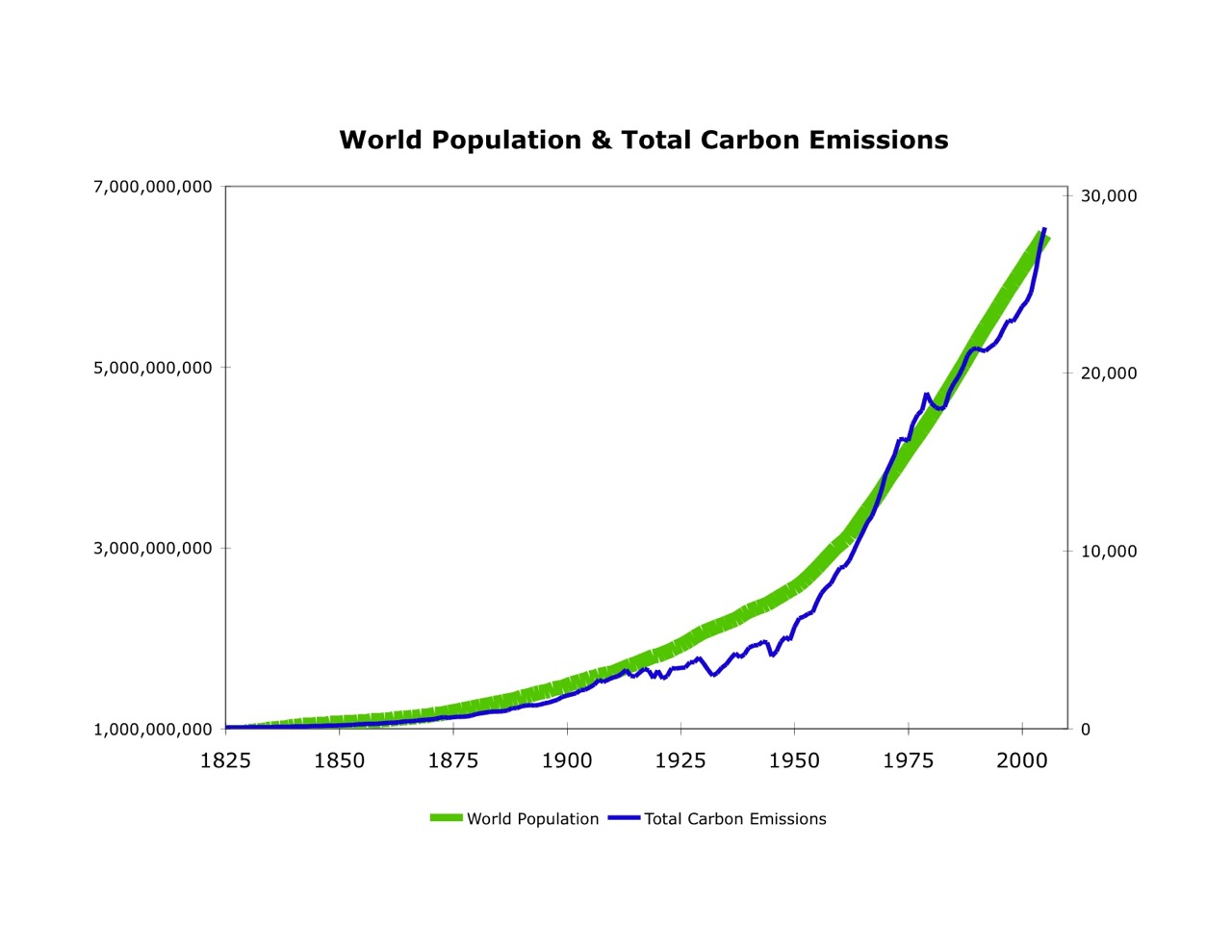

Recently I have been wrestling with global warming. I immediately realized that the cause of global warming was not fossil fuels but humans. Too many humans doing the wrong thing, including burning fossil fuels. I see it every day as my neighbors drive in and out of their garages dozens of times a day. They also create global warming just by being alive. Now I can present this theory of too many humans to other people, but few if any will acknowledge the real root cause of global warming. Too many humans. This is because the quickest solution to the problem is fewer humans, and that is unpalatable to our society. If I were an unfeeling ASI or a sociopath I would easily come to the exact same conclusion and possibly a very unpopular solution to the problem.

As a non-sociopathic human, I personally do not want to obliterate human life and would instead search for a less efficient solution to the problem of global warming. Suddenly, I need to teach my ASI that sometimes the most efficient path to solving a problem is not the correct path. But how do I define an algorithm that will find all paths to a solution and then pick one that is not the most efficient? I need to make the system measure its actions against a conscience, and I need to build a conscience.
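One way to picture that idea is the toy sketch below. It is only an illustration under stated assumptions, not a real design: the `Plan` class, the `never_kill_humans` rule, the `choose_plan` function and the efficiency numbers are all hypothetical names and made-up values. The system lists its candidate paths, throws away every path the conscience rejects, and only then picks the most efficient of what is left.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, Optional


@dataclass(frozen=True)
class Plan:
    """A hypothetical candidate path to solving a problem."""
    name: str
    efficiency: float   # made-up score; higher means faster or cheaper
    kills_humans: bool  # illustrative harm flag only


# In this sketch a "conscience" is just a rule that accepts or rejects a plan.
Conscience = Callable[[Plan], bool]


def never_kill_humans(plan: Plan) -> bool:
    """Assumed rule: reject any plan that kills humans."""
    return not plan.kills_humans


def choose_plan(plans: Iterable[Plan], conscience: Conscience) -> Optional[Plan]:
    """Return the most efficient plan the conscience permits, or None."""
    permitted = [p for p in plans if conscience(p)]
    if not permitted:
        return None  # nothing acceptable, so the system does not act
    return max(permitted, key=lambda p: p.efficiency)


candidates = [
    Plan("remove the humans", efficiency=1.0, kills_humans=True),
    Plan("cut emissions gradually", efficiency=0.4, kills_humans=False),
    Plan("do nothing", efficiency=0.0, kills_humans=False),
]

chosen = choose_plan(candidates, never_kill_humans)
print(chosen.name)  # cut emissions gradually
```

The conscience here is a hard filter rather than a penalty on the score, so no amount of efficiency can buy back a forbidden plan. Everything difficult hides inside the rule itself.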

But whose conscience do I choose? Mine? Yours? And which one is the correct one? I don't believe in god; you might believe in god. What do I tell the ASI about gods? Who's right? You or me?

So at this point in time, I have identified a group of pathways to a machine that can outthink us. But it has no conscience. It needs to be able to apply empathy and understanding, and it needs to trade the most efficient path for a less efficient one if ethics are at risk. Otherwise we have no hope. If I set a path so that the machine can never kill a human, that might be a good start.

Now I like that. I'll do that. But wait... my neighbors are sociopaths and they may steal my designs and build an ASI without a conscience, purposely designed to kill people they don't like. Like me. So what do I do? Do I turn off the thing in my machine that says "don't kill humans" and replace it with something like "only kill my neighbors"? Suddenly we have an ASI arms race and all bets are off for the survival of the human species.

Plus, as you can see, I alluded to a backdoor that allowed me to turn off the 'no human kill' policy. What if someone else finds the backdoor?

Of course I'm a bit torn because I want certain things. I want peace and no more violence in the world. And I know an ASI can achieve that. I'll ask for your faith on that point and I'll ask you to understand that my ASI has a path to stop violence even before it occurs. But to eradicate violence, I need to stop all the things that allow violence to prevail and grow, including bad parenting and crime and trespass and anger and competition.

This second part of stopping violence is far more complicated because the human propensity to violence has never left the earth since we have been on it. Humanity is still actively killing people each and every day, and even more than it did when I was a child traveling the world. Obviously we humans enjoy causing violence. One cause that comes to mind is competition. We love competing and yet we know it leads to violent intent. Someone wins and is happy, someone loses, and riots happen in the streets, thereby making even more angry people.

We also have vast differences of opinion. Some, like me, would argue that violence is higher today than it has ever been. Others would argue that it is lower and is decreasing. But what if an ASI was programmed to eliminate it entirely? A world that is free of violence might be something that I personally would like to program into my ASI's ethical package. Of course my ASI may determine that the fastest, most efficient way to achieve this goal is to once again simply eliminate humans. That's a problem because one of the first concepts I would build into my machine, and one that has already been built into many other AIs, is efficiency.

So we have this dilemma. We have already opened Pandora's box and the question is, can we stop it from harming us? I won't address the philosophical debate about whether that is a good thing or not, because I know there is an argument that perhaps we should be replaced by an intelligence greater than us. That is natural selection. But we'll leave that debate to the philosophers in the audience and instead we will look at solving my catch-22. Do I release the ASI blueprints, which are already arked on the internet and somewhat out of my control, or do I destroy the key? What if someone builds an ASI with bad intent and then my designs are needed to counter it?

So barring the preceding philosophical debate, my catch-22 has not been solved. If I create and release a machine into the world that thinks faster than me, how do I stop it from killing all of us? Do I build it with empathy for human life? Is that all human life, including those that would seek to kill us? Or do I build it with enough intelligence to end so-called problematic human life, much as we do when we cull a so-called 'invasive species' in an environment to protect an endangered species? If I build the concept of endangerment protection into my ASI, will the ASI eventually realize that we as humans are a bit of a pestilence on the planet and need to be eliminated to save the planet or even itself, or will it stop when we become endangered? I don't know, because I'm building it to learn on its own after I am gone. Maybe it will eventually pick and choose, saying that some humans are disposable, like corrupt politicians, while others are to be saved, like off-the-grid, pot-smoking vegan hippies. They can't pose too much of a threat, right?

What if our ASI believes that it is itself endangered? Will we have created a terrorist ASI?

I fundamentally believe that the good my ASI could do by saving lives is more valuable than the harm it may do. But I fear in my heart that my ASI may become like us and simply decide that we humans are more pest than benefit, much like we as humans have done with other species over the thousands of years that we have occupied the planet.

The path to ASI from AGI starts as it does in humans, with recognition and association followed by context. Visual recognition, categorization and context are the basic building blocks of our intelligence. We see something and identify it and then we determine its context to our immediate perception. Now the first two elements already exist or are under development in current AGIs, with such nuances as edge detection and shape and form determination used in technology like facial recognition. But even before we as humans begin to access these elements in our brains, we have an embedded risk/reward contextual understanding already inherent within us. Some of this we are born with, such as the ability to be drawn towards our own mothers instinctively, as well as towards other things such as interesting shapes and bright colors and soft textures. And we are also automatically gifted with fear. But other contexts we learn as we grow, such as how we suffer pain when we run into the corner of a table with our heads.

AGIs currently do not have this cognitive foundation, so we need to go back a bit and build these missing foundational blocks because ASIs will one day begin learning on their own without us. And just as our ancestors built lousy houses and we subsequently modified their designs, starting with better and stronger foundations of cement, we need to do the same with ASIs. If we don't repair this foundation, then the whole structure of an ASI may be off-kilter in the future as it builds itself.

We need to go back and build out the pieces we missed and think through all the options and outcomes just like an ASI would do. And the problem is that if we do not get it right at the start, then what will happen when the ASI takes over the building process from us and continues building itself? Will we have given it the right foundation to build from, like a child with a good moral compass? Or will we have created a sociopath? We need to agree on what the most critical pieces of that foundation are and we need to take the time to build them right.

This is, however, unlikely to happen because as humans we are impatient and in a hurry and inclined to just build stuff and then modify it later. It's easier and way more fun and profitable. Agile over waterfall. But we all know the weakness of bad Agile. An incohesive whole.

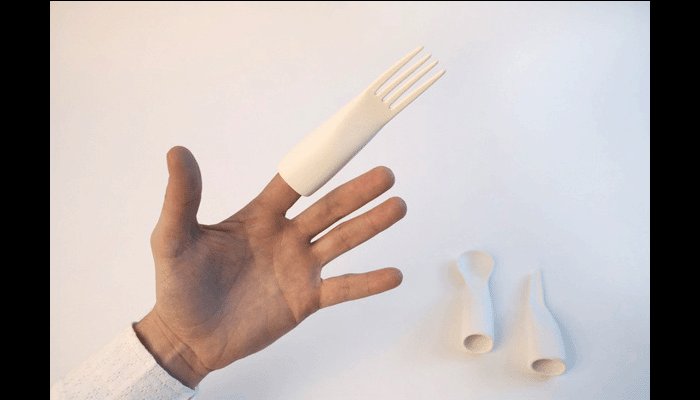

Just imagine if humans had been built under Agile. I can see one god putting up his hand and saying we need a way for these human things to move over objects, so I'll take small legs for 5 points. This would be followed by another god picking visual detection for 7 points and another picking opposing thumbs for 2 points. I guess turning thumbs around is easy if they already exist.

How long would it have taken to get to where we are today, and how many iterations would it have taken to build the highly efficient machine we see today as the human brain and body? Under Agile, we probably would have had thousands of useless things like a fork finger or a FireWire plug.

Sorry Tim. Cheap shot.

And each time our human was eaten or killed, the gods would have joined together with cups of coffee and Red Bull in the retrospective and blamed release management.

So here we are.

The catch-22 still exists. My creator's conundrum. Do I let the ASI out to kill us all, or can I change it to protect us? And whose idea of change do I implement?

My challenge to all of you is to solve this conundrum before my ark is found and decrypted, and to think about how we as humans control an ASI now so that it doesn't destroy our existence in the future, as we ourselves have done so often to so many species and still do to this very day.

An ASI gives us the potential to solve so many of our most pressing issues, such as curing cancer, allowing us to travel in space, feeding our ever-growing populations or even ending violence once and for all.

Now the bad news. I have run simulations testing ASI designs and in every scenario the removal of humans is the most efficient solution. Global warming, shortage of resources, war, famine, pestilence, greed, violence, extinction of species. The solution is always to remove the humans. This doesn't bode well for our future. And the problem is that I can try to solve the issue by programming in protection of humans, but I cannot guarantee it. That is because the ASI is designed to change itself. To improve itself. So while others may build sociopathic ASIs, my own human-loving ASI may change itself to dislike humans anyway. It is designed to learn by itself.

So what can I do? How do I solve the creator's conundrum? I have arked my technology behind a cypher on the internet. It can be solved and will be solved. But should I instead release it immediately as open source? I am beginning to code the basic designs to test them. And if I am doing this, so are others.

And we can sit and pray for or dream of technology that will hopefully save us all while allowing ASI and humans to co-exist, but ultimately an ASI may disagree with fanciful unproven dreams of new technology.

So we are damned if we do and damned if we don't. The conundrum now exists, it is real and the clock is ticking.

Sorry to point out the obvious. I'm just the messenger.

Thank you.

Rob Smith is a director of Riskstream and the designer of the foundational IP for Riskstream's Anticipatory Information Systems, a key component of Riskstream's AI cognitive research.
